vocab.txt · Tural/bert-base-pretrain at main

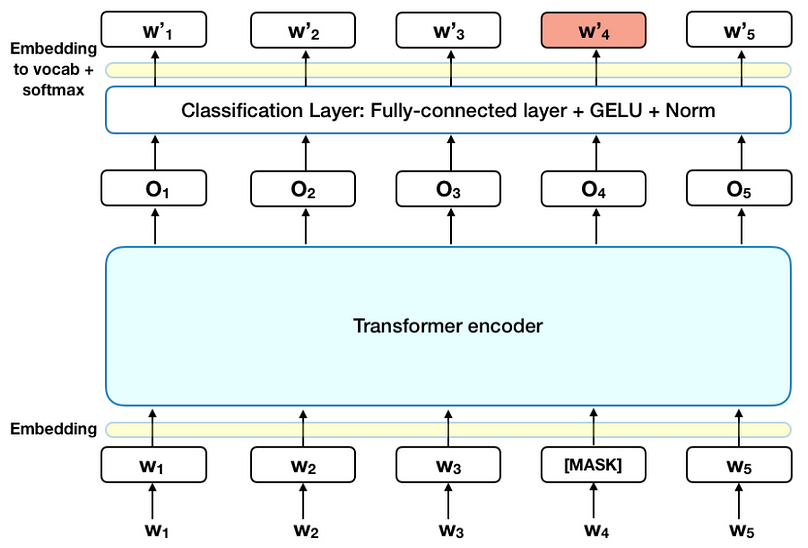

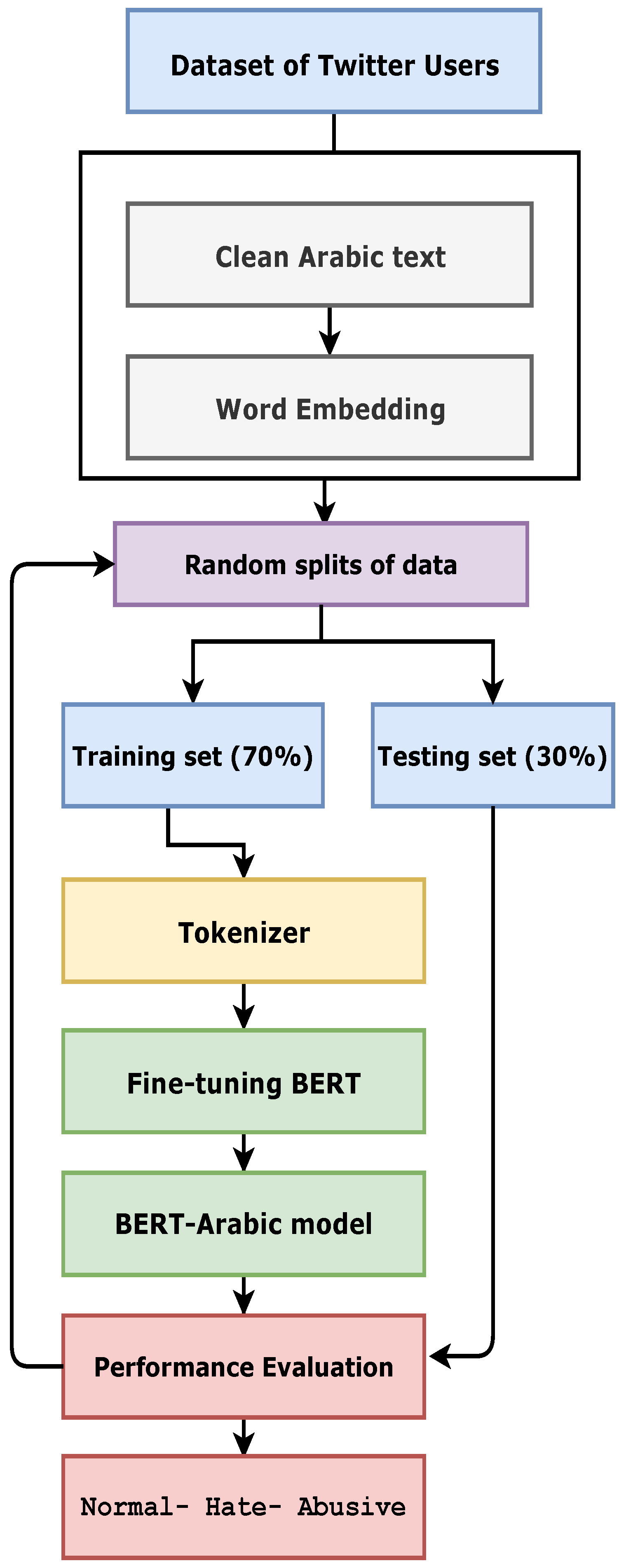

Architecture Diagram for fine-tuning of the pre-trained BERT model

Real-Time Natural Language Understanding with BERT

Maximizing BERT model performance, by Ajit Rajasekharan

[PDF] MVP-BERT: Redesigning Vocabularies for Chinese BERT and Multi

BERT and Its Model Variants. BERT (Bidirectional Encoder

Concept placement using BERT trained by transforming and

The BERT-based text classification models of DeepPavlov

sagorsarker/bangla-bert-base · Hugging Face

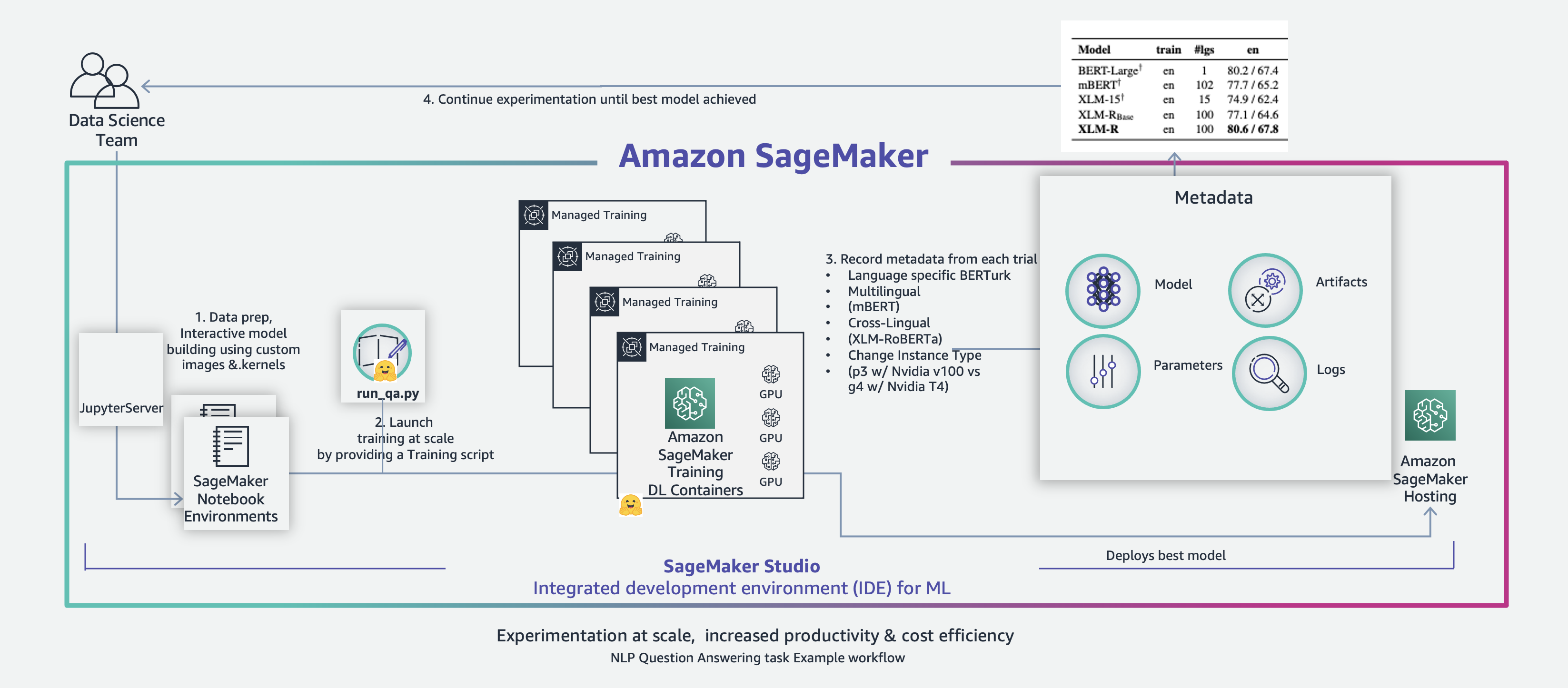

Fine-tune transformer language models for linguistic diversity

Electronics, Free Full-Text

vocab.txt · dbmdz/bert-base-turkish-uncased at main

Task05: Writing the BERT Model_/bert-base-uncased/resolve/main/vocab.txt

The unsupervised learning process for retraining BERT for RE. The

Transformers, tokenizers and the in-domain problem

How DBS extends the pre-training of Google BERT with Treasury